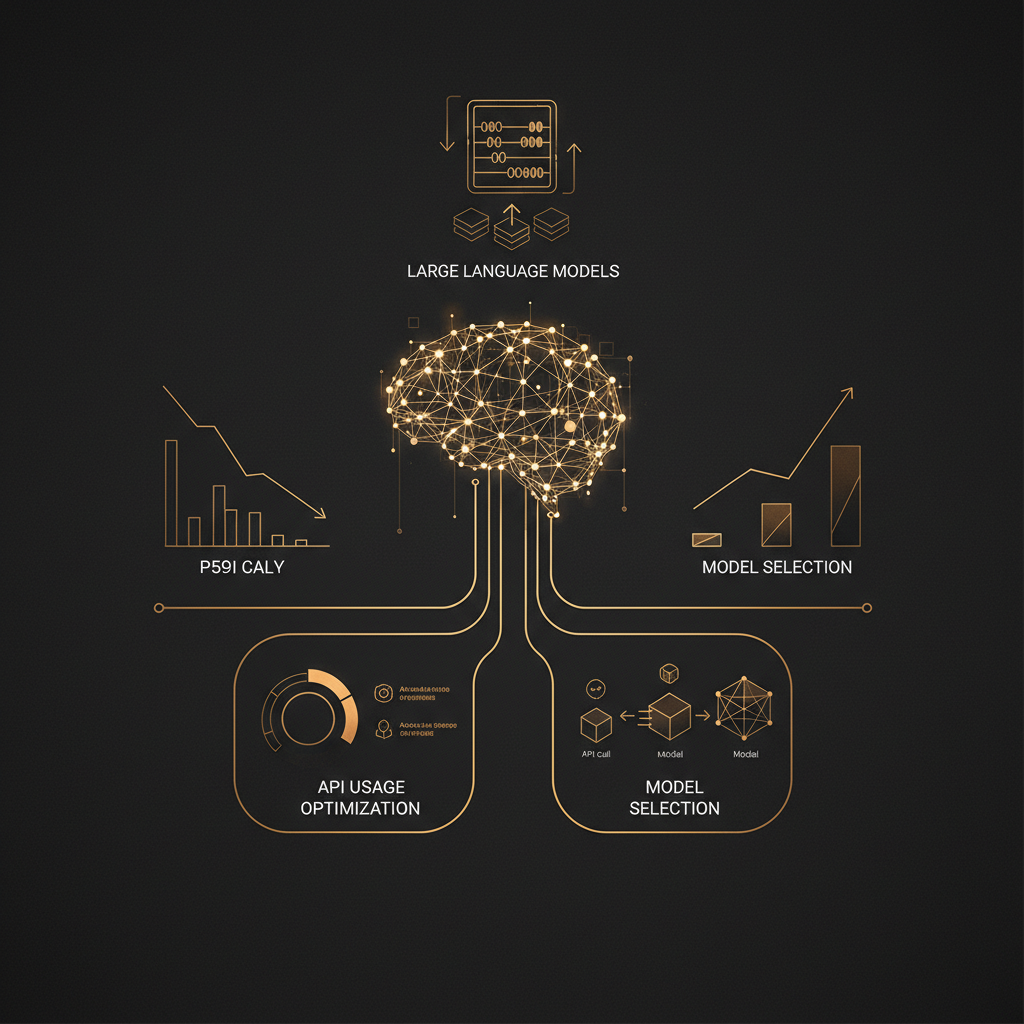

Optimising LLM Costs in Production: Practical Strategies for API Usage and Model Selection

Deploying Large Language Models (LLMs) in production offers significant business value, but managing their operational costs, particularly API usage, is crucial for profitability. This article outlines practical strategies for UK businesses to reduce LLM expenses without sacrificing performance. Key areas include intelligent prompt engineering, effective caching, strategic model selection, and robust monitoring. We discuss how to choose the right model for specific tasks, leverage smaller or fine-tuned models, and implement governance to track and control spending. By adopting these methods, organisations can ensure their AI initiatives remain efficient and cost-effective.

Clinton Onyekwere

Clinton AI Ltd

Introduction

The adoption of Large Language Models (LLMs) has progressed rapidly, moving from experimental prototypes to core components of business operations. From enhancing customer service with AI chatbots to automating content generation for e-commerce platforms and streamlining complex procurement processes in maritime logistics, LLMs are proving their ability to create measurable value. However, as these systems scale from development environments to production, the operational costs associated with their use, particularly when relying on third-party API providers, become a significant consideration.

Uncontrolled LLM API usage can quickly erode the financial benefits an AI system provides. Businesses need a clear, systematic approach to manage these expenses, ensuring that their investment in AI delivers sustainable returns. This article addresses the challenge of LLM cost optimisation, providing practical strategies for technical decision-makers and business leaders in the UK. We will explore methods for intelligent API usage, strategic model selection, and effective governance, all aimed at reducing expenditure while maintaining or improving performance and reliability in production environments.

Understanding LLM Cost Drivers

Before optimising LLM costs, it is essential to understand where these expenses originate. The primary cost drivers for LLM API usage are typically tied to the volume and complexity of interactions.

Tokenisation and Pricing Models

LLM providers like OpenAI, Anthropic, and Google charge based on "tokens." A token is not necessarily a word, but rather a chunk of text, which can be a word, part of a word, or even punctuation. For English text, one token generally equates to about four characters, or roughly 0.75 words.

Costs are usually differentiated between input tokens (the prompt sent to the model) and output tokens (the model's response). Output tokens are often more expensive than input tokens, reflecting the computational effort involved in generating text. Furthermore, different models within a provider's suite have varying prices. For example, OpenAI's GPT-4 Turbo models are significantly more expensive per token than GPT-3.5 Turbo, due to their superior reasoning capabilities and larger context windows.

Consider a scenario where a business uses GPT-4 Turbo for generating detailed product descriptions. If a prompt is 500 tokens and the generated description is 1,000 tokens, and the input cost is £0.01 per 1,000 tokens while output is £0.03 per 1,000 tokens, that single interaction costs: (500 tokens / 1,000) * £0.01 + (1,000 tokens / 1,000) * £0.03 = £0.005 + £0.03 = £0.035. Multiply this by thousands or millions of calls, and costs accumulate rapidly.
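The same arithmetic can be captured in a small helper, which makes it easier to test different volumes and prices. This is a minimal sketch: the function name and the 100,000-call volume are illustrative assumptions, and the prices are the example figures above rather than any provider's actual rates.

```python
def estimate_call_cost(input_tokens: int, output_tokens: int,
                       input_price_per_1k: float, output_price_per_1k: float) -> float:
    """Return the cost of one call in pounds, given per-1,000-token prices."""
    input_cost = (input_tokens / 1000) * input_price_per_1k
    output_cost = (output_tokens / 1000) * output_price_per_1k
    return input_cost + output_cost


# Figures from the worked example (illustrative prices, not a quote)
per_call = estimate_call_cost(500, 1000, input_price_per_1k=0.01, output_price_per_1k=0.03)

# Hypothetical volume of 100,000 calls per month
monthly = per_call * 100_000

print(f"Per call: £{per_call:.3f}")
print(f"Per 100,000 calls: £{monthly:,.2f}")
```

Note that in this example the output tokens account for most of the cost, which is why limiting response length is often the first lever to pull.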

API Call Volume and Latency

The volume of API calls is a direct cost driver. Most providers bill per token rather than per request, but every call resends its system prompt, instructions, and context, so that fixed token overhead is paid again each time. High-frequency applications, such as real-time customer support chatbots or systems performing rapid data analysis, can generate a substantial number of calls.

Latency, while not a direct cost driver in monetary terms, indirectly impacts operational efficiency and user experience. Longer response times can lead to increased infrastructure costs if more resources are needed to handle requests, or a poor user experience that reduces adoption and value. For example, an e-commerce platform using an LLM for product recommendations might find that slow responses deter customers, negating the value of the AI.

Model Complexity and Performance

Larger, more capable LLMs, such as GPT-4, Claude 3 Opus, or Gemini 1.5 Pro, offer superior performance in terms of reasoning, coherence, and handling complex instructions. They are often necessary for tasks requiring nuanced understanding, creative writing, or multi-step problem-solving. However, this enhanced capability comes at a higher token cost.

Conversely, smaller, faster models like GPT-3.5 Turbo or Claude 3 Haiku are much cheaper per token and offer lower latency. They are well-suited for simpler tasks such as text summarisation, basic classification, or generating short, factual responses. The challenge lies in selecting the appropriate model for each specific task, avoiding the overuse of expensive, powerful models where a more economical option would suffice. A common mistake is defaulting to the most powerful LLM for all tasks, leading to unnecessary expenditure.

Data Transfer and Storage

While less prominent for API-based LLM usage, data transfer and storage costs can become relevant in scenarios involving fine-tuning or self-hosting LLMs. Preparing large datasets for fine-tuning, storing model weights, and transferring data between compute instances can add to the overall expenditure. For businesses considering self-hosting open-source LLMs, the infrastructure costs for GPUs, memory, and networking become significant. This includes not only the initial setup but also ongoing maintenance and energy consumption.

Strategy 1: Intelligent API Usage

Optimising how your application interacts with LLM APIs can significantly reduce costs. These strategies focus on making each API call as efficient and effective as possible.

Prompt Engineering for Efficiency

The way prompts are constructed directly influences token counts and output quality. Thoughtful prompt engineering can lead to substantial savings.

Minimising Input Tokens

- Concise Instructions: Avoid verbose or redundant language in prompts. Get straight to the point. Instead of, "Could you please generate a summary of the following text, ensuring it is no more than five sentences and captures the main ideas?", try "Summarise this text in five sentences, focusing on main ideas."

- Context Management: Only include necessary information. If a system has memory of previous turns in a conversation, ensure only relevant recent exchanges are sent, rather than the entire chat history. For instance, in a customer support chatbot, summarise long conversations before sending them to the LLM for the next turn.

- Few-shot vs. Zero-shot: For some tasks, providing a few examples (few-shot prompting) can improve accuracy. However, each example adds to the input token count. Evaluate if zero-shot prompting with clear instructions yields acceptable results, or if the improved accuracy from few-shot justifies the increased token cost.
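One way to apply the context management advice is to keep only the most recent messages that fit a token budget. The sketch below assumes the rough "four characters per token" rule mentioned earlier instead of a real tokeniser, and the function names and message format are illustrative rather than taken from any particular SDK.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate: about four characters per token for English text."""
    return max(1, len(text) // 4)


def trim_history(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep the most recent messages whose estimated tokens fit within max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "a" * 400},       # roughly 100 tokens
    {"role": "assistant", "content": "b" * 200},  # roughly 50 tokens
    {"role": "user", "content": "c" * 80},        # roughly 20 tokens
]
recent = trim_history(history, max_tokens=80)
print([m["role"] for m in recent])
```

In production, a provider's own tokeniser would give accurate counts, and older turns could be summarised rather than dropped.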

Maximising Output Utility

- Specific Output Formats: Instruct the LLM to provide responses in a structured format, such as JSON, bullet points, or a fixed number of sentences. This reduces the likelihood of the model generating excessive, irrelevant text.

- Example: For an e-commerce platform generating product attributes:

      { "prompt": "Extract the brand, material, and key features from the following product description: 'This premium leather wallet by Acme Co. features multiple card slots, an ID window, and RFID blocking technology. Crafted from full-grain Italian leather.'", "response_format": "JSON with keys: brand, material, features" }

  The model is then constrained to output only the requested data, avoiding conversational filler.

- Avoiding Verbosity: Explicitly tell the model to be concise. Phrases like "Be brief," "Provide only the answer," or "No preamble" can help reduce unnecessary output tokens.
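Structured output is only useful if the application checks it. As a minimal sketch, assuming the model was asked for a JSON object with the keys brand, material, and features, a response could be validated before use as follows. The function name and sample response are illustrative.

```python
import json

REQUIRED_KEYS = {"brand", "material", "features"}


def parse_attributes(raw_response: str) -> dict:
    """Parse a model response expected to be a JSON object with the required keys."""
    data = json.loads(raw_response)  # raises json.JSONDecodeError on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("Expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"Missing keys: {sorted(missing)}")
    return data


# Hypothetical model response for the wallet example
sample = (
    '{"brand": "Acme Co.", "material": "full-grain Italian leather", '
    '"features": ["multiple card slots", "ID window", "RFID blocking"]}'
)
attributes = parse_attributes(sample)
print(attributes["brand"])
```

Rejecting malformed responses early avoids paying for downstream calls that build on bad data.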

Caching and Deduplication

Many LLM requests are repetitive. Caching responses for frequently asked questions or common queries can eliminate redundant API calls, leading to significant cost savings and improved latency.

Implementing a Caching Layer

- Cache Key: Generate a unique key for each prompt, perhaps by hashing the prompt text along with any relevant system parameters (e.g., model name, temperature setting).

- Cache Store: Use an in-memory cache (like functools.lru_cache in Python for simple cases) or a distributed cache system (like Redis or Memcached) for larger, multi-instance applications.

- Cache Invalidation: Determine a strategy for when cached responses become stale. For static information, a long expiry time is acceptable. For dynamic data, a shorter expiry or event-driven invalidation might be necessary.
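For the simple single-process case, functools.lru_cache can serve as the cache store with very little code. The sketch below uses a placeholder in place of a real API call, and the function name and call counter are illustrative. Note that lru_cache has no expiry, so it only suits responses that do not go stale.

```python
from functools import lru_cache

call_count = 0  # counts how many times the (simulated) model is actually called


@lru_cache(maxsize=1024)
def cached_completion(prompt: str, model: str, temperature: float = 0.0) -> str:
    """Return a response, reusing the stored result for identical arguments."""
    global call_count
    call_count += 1
    # Placeholder for a real API call
    return f"Response to '{prompt}' from {model}"


first = cached_completion("What is the capital of France?", "gpt-3.5-turbo")
second = cached_completion("What is the capital of France?", "gpt-3.5-turbo")
print(call_count, cached_completion.cache_info())
```

The function arguments act as the cache key, so the model name and temperature are included automatically.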

Example: Python with Redis Cache

    import hashlib
    import json
    import redis
    import os

    # Assume REDIS_HOST and REDIS_PORT are set in environment variables
    r = redis.Redis(host=os.getenv('REDIS_HOST', 'localhost'), port=int(os.getenv('REDIS_PORT', 6379)), db=0)

    def get_llm_response_cached(prompt: str, model: str, temperature: float = 0.7, ttl_seconds: int = 3600):
        """
        Retrieves an LLM response, checking cache first.
        If not in cache, calls the LLM and stores the result.
        """
        # Create a cache key from prompt and parameters
        cache_key_data = {
            "prompt": prompt,
            "model": model,
            "temperature": temperature
        }
        cache_key = "llm_cache:" + hashlib.sha256(json.dumps(cache_key_data, sort_keys=True).encode('utf-8')).hexdigest()

        cached_response = r.get(cache_key)
        if cached_response:
            print(f"Cache hit for key: {cache_key}")
            return json.loads(cached_response.decode('utf-8'))['response']

        print(f"Cache miss for key: {cache_key}, calling LLM...")

        # Simulate LLM API call (replace with actual API call)
        # from openai import OpenAI
        # client = OpenAI()
        # response_obj = client.chat.completions.create(
        #     model=model,
        #     messages=[{"role": "user", "content": prompt}],
        #     temperature=temperature
        # )
        # llm_response = response_obj.choices[0].message.content

        # Placeholder for actual LLM call
        llm_response = f"LLM generated response for '{prompt}' using {model}."

        # Store in cache
        r.setex(cache_key, ttl_seconds, json.dumps({"response": llm_response}).encode('utf-8'))
        return llm_response

    # Usage example
    print(get_llm_response_cached("What is the capital of France?", "gpt-3.5-turbo"))
    print(get_llm_response_cached("What is the capital of France?", "gpt-3.5-turbo"))  # This will be a cache hit
    print(get_llm_response_cached("Tell me a fun fact about Leeds.", "gpt-3.5-turbo", ttl_seconds=60))

This simple caching mechanism can significantly reduce the number of API calls for identical prompts, especially in applications with predictable user queries or frequent internal data processing tasks.

Batching Requests

If your application needs to process multiple independent prompts, batching them into a single API call can reduce costs and improve throughput. Many LLM providers offer batching capabilities, allowing you to send a list of prompts rather than individual requests. This often comes with a reduced per-request overhead.

For example, if an e-commerce content platform needs to generate short product tags for 100 different items, sending 100 individual real-time API calls might be less efficient than submitting a single batch job containing all 100 prompts. OpenAI's Batch API, for instance, is designed for this: requests are submitted together, processed asynchronously, and priced lower than real-time API calls. The trade-off is that results arrive later rather than immediately, so batching suits work that is not time-sensitive.

Consider a scenario where an education platform needs to generate summaries for 50 student essays. Instead of 50 separate real-time requests, a batch job can process these overnight, taking advantage of discounted batch pricing where the provider offers it.
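As a rough sketch of how such a batch might be prepared, the snippet below builds one JSON line per prompt, using the request shape (custom_id, method, url, body) described in OpenAI's Batch API documentation at the time of writing. The function name, the custom_id scheme, and the max_tokens value are assumptions; the upload and job-creation steps are omitted, so check the provider's current documentation before relying on this format.

```python
import json


def build_batch_lines(prompts: list[str], model: str, max_tokens: int = 100) -> list[str]:
    """Return one JSON string per prompt, ready to be written to a .jsonl file."""
    lines = []
    for index, prompt in enumerate(prompts):
        request = {
            "custom_id": f"request-{index}",  # lets you match results back to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [{"role": "user", "content": prompt}],
                "max_tokens": max_tokens,
            },
        }
        lines.append(json.dumps(request))
    return lines


essay_prompts = [f"Summarise essay number {n} in three sentences." for n in range(1, 51)]
batch_lines = build_batch_lines(essay_prompts, model="gpt-3.5-turbo")
print(len(batch_lines), "requests prepared")
```

Writing these lines to a file, one per row, produces the input for a batch job; results are returned keyed by custom_id.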

Asynchronous Processing

While not directly reducing token costs, asynchronous programming improves the efficiency of your application's resource utilisation. By not blocking execution while waiting for an LLM response, your application can handle more requests concurrently or perform other tasks. This can indirectly lead to cost savings by allowing your existing infrastructure to process more work, potentially delaying the need for scaling up or reducing idle time.

For I/O-bound operations like API calls, asyncio in Python is a common pattern.

    import asyncio

    # from openai import AsyncOpenAI  # Uncomment for actual OpenAI calls (the async client is required for await)
    # client = AsyncOpenAI()  # Initialise client once

    async def call_llm_async(prompt: str, model: str):
        """Simulates an asynchronous LLM API call."""
        # In a real scenario, this would be an actual API call, e.g.:
        # response = await client.chat.completions.create(
        #     model=model,
        #     messages=[{"role": "user", "content": prompt}]
        # )
        # return response.choices[0].message.content
        await asyncio.sleep(1)  # Simulate network latency
        return f"Async response for: {prompt}"

    async def main():
        prompts = [
            "Summarise the benefits of cloud computing.",
            "Explain quantum entanglement simply.",
            "What is the capital of Scotland?"
        ]
        # Start all calls concurrently and wait for every result
        tasks = [call_llm_async(p, "gpt-3.5-turbo") for p in prompts]
        responses = await asyncio.gather(*tasks)

        for i, response in enumerate(responses):
            print(f"Prompt {i+1}: {prompts[i][:30]}... -> {response[:50]}...")

    if __name__ == "__main__":
        asyncio.run(main())

This allows multiple LLM calls to be "in flight" simultaneously, reducing the total wall-clock time for processing a batch of requests.

Strategy 2: Strategic Model Selection and Management

Choosing the right LLM for each specific task is perhaps the most impactful strategy for cost optimisation. Not all tasks require the most powerful, and therefore most expensive, models.

The Right Model for the Job

LLM providers offer a range of models, each with different capabilities and pricing. Understanding these differences allows for intelligent allocation of resources.

- OpenAI:

  - GPT-3.5 Turbo: Cost-effective, fast, and suitable for common tasks like summarisation, classification, and basic content generation. Often the default choice for initial development and many production workloads.

  - GPT-4 Turbo (various versions): Significantly more capable for complex reasoning, creative tasks, coding, and handling large context windows. Higher cost per token. Use when accuracy, depth of understanding, or lengthy context is paramount.

- Anthropic:

  - Claude 3 Haiku: Very fast and economical, designed for high-volume, less complex tasks. Good for customer service, quick data extraction.

  - Claude 3 Sonnet: A balance of intelligence and speed, suitable for enterprise workloads requiring strong performance at a reasonable cost.

  - Claude 3 Opus: Anthropic's most intelligent model, excelling at highly complex tasks, research, and advanced reasoning. Highest cost.

- Google:

  - Gemini 1.5 Flash: A lighter, faster, and more cost-effective model, good for high-volume, low-latency applications.

  - Gemini 1.5 Pro: A powerful, multimodal model with a massive context window (up to 1 million tokens), suitable for complex tasks, code generation, and analysing long documents or videos.

Model Comparison Table (Illustrative, prices change)

| Model Family | Typical Use Cases | Cost (Input/Output per 1M tokens, GBP approx.) | Key Advantages |
|---|---|---|---|
| GPT-3.5 Turbo | Summarisation, classification, chatbots, basic content generation, data extraction | £0.40 / £1.20 | Cost-effective, fast, good for many common tasks. |
| GPT-4 Turbo | Complex reasoning, creative writing, coding, multi-turn conversations, large context | £8.00 / £24.00 | Highly capable, excellent reasoning, large context window, multimodal. |
| Claude 3 Haiku | High-volume, low-latency, customer service, quick data processing | £0.20 / £1.00 | Very fast, highly economical, good for immediate responses. |
| Claude 3 Sonnet | Enterprise workloads, strong performance, code generation, data analysis | £2.20 / £11.00 | Balanced intelligence and speed, good for a wide range of business applications. |
| Claude 3 Opus | Advanced research, strategic analysis, complex problem-solving, deep reasoning | £11.00 / £55.00 | Most capable, handles highly complex, open-ended tasks with high accuracy. |
| Gemini 1.5 Flash | High-volume, low-latency, quick summaries, simple question answering | £0.28 / £0.84 | Fast, efficient, good for scaling applications with less demanding tasks. |
| Gemini 1.5 Pro | Complex multimodal tasks, large context (1M tokens), extensive document analysis | £2.80 / £8.40 | Massive context window, multimodal capabilities, strong performance on complex reasoning. |
| Open-Source (e.g., Llama 3, Mixtral) | Self-hosting, fine-tuning, specific domain tasks, privacy-sensitive | Variable (compute, expertise) | Full control, no per-token costs (after infrastructure), data privacy, customisation. |

Note: Prices are approximate and subject to change by providers. Always check the latest pricing on official websites.

Model Chaining and Specialisation

A powerful optimisation technique involves routing tasks to different models based on their complexity. This "router LLM" or "model chaining" approach ensures that expensive, powerful models are only used when absolutely necessary.

Example: A Content Generation Workflow

An e-commerce platform using AI to generate product descriptions might implement the following logic:

- Initial Classification (GPT-3.5 Turbo / Claude 3 Haiku): A lightweight model classifies the product type (e.g., "Electronics", "Apparel", "Home Goods") and identifies key attributes from raw input data. This is a simple, low-cost classification task.

- Sentiment Analysis / Tone Check (GPT-3.5 Turbo / Claude 3 Haiku): Another small model checks the sentiment or desired tone for the description (e.g., "formal", "playful", "informative").

- Description Generation (GPT-4 Turbo / Claude 3 Sonnet): Based on the classification, attributes, and tone, a more capable model generates the actual product description. This model is chosen for its creative writing and coherence.

- Review and Refinement (GPT-3.5 Turbo / Claude 3 Haiku): A final, cheaper model can check the generated description for basic grammar, spelling, and adherence to length constraints.

By chaining these models, the overall cost is significantly lower than if GPT-4 Turbo were used for every step of the process. LangChain and similar frameworks provide tools for building such multi-model workflows.
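The four-step workflow can be sketched as a simple routing table that maps each task type to a model. Everything here is illustrative: the task names, the routing choices, and the call_model callable (a stand-in for whichever API client you use) are assumptions, and the model strings are labels rather than guaranteed API identifiers.

```python
from typing import Callable

# Hypothetical routing table: task type -> model label
MODEL_BY_TASK = {
    "classification": "gpt-3.5-turbo",
    "tone_check": "gpt-3.5-turbo",
    "description_generation": "gpt-4-turbo",
    "review": "gpt-3.5-turbo",
}
DEFAULT_MODEL = "gpt-3.5-turbo"


def select_model(task_type: str) -> str:
    """Return the model for a task, falling back to the cheaper default."""
    return MODEL_BY_TASK.get(task_type, DEFAULT_MODEL)


def generate_description(raw_data: str, call_model: Callable[[str, str], str]) -> str:
    """Run the four-step workflow, sending each step to its assigned model."""
    category = call_model(select_model("classification"), f"Classify this product: {raw_data}")
    tone = call_model(select_model("tone_check"), f"Suggest a tone for: {raw_data}")
    draft = call_model(
        select_model("description_generation"),
        f"Write a {tone} description for a {category} product: {raw_data}",
    )
    return call_model(select_model("review"), f"Fix grammar and check length: {draft}")


# Stand-in for a real API client so the sketch runs offline; it records which model was used
calls_made = []


def fake_call_model(model: str, prompt: str) -> str:
    calls_made.append(model)
    return f"[{model} output]"


result = generate_description("Leather wallet, RFID blocking", fake_call_model)
print(calls_made)
```

Only one of the four calls reaches the expensive model, which is where the saving comes from.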

Fine-tuning Smaller Models

For highly specific, repeatable tasks within a particular domain, fine-tuning a smaller, base model can be a very effective long-term cost-saving strategy. Instead of relying on a large, general-purpose model to "learn" your specific context via prompt engineering every time, you train a smaller model to excel at that narrow task.

When to Consider Fine-tuning:

- High Volume, Repetitive Tasks: If your application performs the same type of task thousands of times a day (e.g., classifying specific types of customer queries, extracting highly structured data from domain-specific documents).

- Domain-Specific Language: When your industry uses unique terminology or jargon that general-purpose models struggle with without extensive prompting.

- Consistency and Control: Fine-tuning offers more consistent output quality and behaviour compared to prompt engineering, which can sometimes be sensitive to minor prompt changes.

- Data Privacy: For sensitive data that cannot be sent to third-party APIs, fine-tuning a model on your own infrastructure might be a requirement.

Cost Implications:

- Training Costs: Fine-tuning incurs upfront costs for data preparation, compute resources (GPUs), and developer time.

- Reduced Inference Costs: Once fine-tuned, the smaller model can perform the specific task much more cheaply per token than a large foundation model. For instance, a fine-tuned GPT-3.5 Turbo can be significantly cheaper than using GPT-4 for the same task, and often performs better on the specific domain it was trained on.

- Ownership and Control: For open-source models, fine-tuning provides full control over the model's behaviour and deployment.
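Whether the upfront training cost pays off depends on call volume. A rough break-even estimate, assuming a fixed one-off training cost and stable per-call costs, can be sketched as follows. All figures are hypothetical and the function name is illustrative.

```python
import math


def break_even_calls(training_cost: float, cost_per_call_large: float,
                     cost_per_call_tuned: float) -> int:
    """Return the number of calls after which the fine-tuned model becomes cheaper overall."""
    saving_per_call = cost_per_call_large - cost_per_call_tuned
    if saving_per_call <= 0:
        raise ValueError("The fine-tuned model must be cheaper per call to break even")
    return math.ceil(training_cost / saving_per_call)


# Hypothetical figures: £500 to fine-tune, £0.035 per call on a large model,
# £0.005 per call on the fine-tuned smaller model
calls_needed = break_even_calls(500.0, 0.035, 0.005)
print(f"Break-even after about {calls_needed:,} calls")
```

At a few thousand calls a day, a break-even point in the tens of thousands of calls is reached within weeks; at a few dozen calls a day, it may never justify the effort.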

Example: Fine-tuning for Maritime Procurement

A maritime procurement system, like Clinton AI's PortQuote, deals with highly specific requests for parts, services, and logistics. A general LLM might struggle with the nuances of "bunker fuel specifications" or "class society certifications" without extensive, token-heavy prompts. Fine-tuning a model on a dataset of historical procurement requests, specifications, and supplier responses would enable it to:

- Accurately extract key parameters from a procurement request (e.g., vessel name, port, item quantity, required delivery date).

- Generate precise, industry-standard language for RFQs (Requests for Quotation).

- Classify incoming supplier quotes based on specific criteria.

This fine-tuned model would perform these tasks faster, more accurately, and at a fraction of the cost per inference compared to repeatedly prompting a large, general model.

Quantisation and Pruning for On-Device/Local Deployment

For certain applications, especially those requiring extreme privacy, low latency, or offline capabilities, deploying smaller, open-source LLMs locally or on-device can be a viable strategy. Quantisation and pruning are techniques to make these models more efficient.

- Quantisation: Reduces the precision of the model's weights (e.g., from 32-bit floating-point to 8-bit integers). This significantly shrinks the model size and speeds up inference, often with a minimal drop in accuracy. Tools like bitsandbytes or llama.cpp facilitate quantisation.

- Pruning: Removes redundant connections or neurons from the neural network. This reduces the model's complexity and size, but requires careful experimentation to maintain performance.

While these techniques introduce their own operational complexities (managing infrastructure, updates), they eliminate per-token API costs and offer complete control over data. This can be particularly attractive for UK businesses with stringent data sovereignty requirements or those operating in environments with limited internet connectivity.
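To get a feel for what quantisation saves, the storage needed for a model's weights can be approximated as the number of parameters multiplied by the bits per weight. The sketch below assumes a hypothetical 7-billion-parameter model and ignores runtime overheads such as activations and caches, so treat the results as lower bounds.

```python
def model_size_gb(num_parameters: int, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9


params = 7_000_000_000  # hypothetical 7-billion-parameter model

for bits in (32, 16, 8, 4):
    print(f"{bits}-bit weights: about {model_size_gb(params, bits):.1f} GB")
```

Moving from 32-bit to 8-bit weights cuts the storage requirement to a quarter, which can be the difference between needing a data-centre GPU and fitting on commodity hardware.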

Strategy 3: Monitoring, Analytics, and Governance

Effective cost optimisation is an ongoing process that requires visibility and control. Implementing robust monitoring, analytics, and governance frameworks is crucial for sustained cost reduction.

Cost Tracking and Attribution

You cannot optimise what you do not measure. Detailed tracking of LLM usage and associated costs is fundamental.

- API Provider Dashboards: All major LLM providers offer dashboards to monitor usage and expenditure. While useful for an overview, they often lack granular detail for specific application features or user groups.

- Custom Logging and Analytics: Implement logging within your application to capture every LLM API call, including:

  - Timestamp

  - Model used (e.g., gpt-3.5-turbo, gpt-4)

  - Input token count

  - Output token count

  - Application feature or user ID that initiated the call

  - Cost calculation based on current pricing

  - Latency

  - Success/failure status

- Integration with Observability Platforms: Send these logs to a centralised observability platform (e.g., Datadog, Splunk, ELK stack). This allows for custom dashboards, alerts, and detailed analysis.

- LangChain Callbacks: Frameworks like LangChain offer callback mechanisms that can be used to log LLM interactions automatically.

Example: Simple Python Logging

    import logging
    import time

    logging.basicConfig(level=logging.INFO, format='%(asctime)s - %(levelname)s - %(message)s')

    def record_llm_call(model_name: str, input_tokens: int, output_tokens: int, duration_ms: int, feature_name: str):
        """Logs details of an LLM API call."""
        # Placeholder for actual pricing logic
        # Assume illustrative costs: input £0.50/1M, output £1.50/1M
        input_cost_per_token = 0.50 / 1_000_000
        output_cost_per_token = 1.50 / 1_000_000
        estimated_cost = (input_tokens * input_cost_per_token) + (output_tokens * output_cost_per_token)

        logging.info(
            f"LLM_CALL: Model={model_name}, InputTokens={input_tokens}, "
            f"OutputTokens={output_tokens}, Cost=£{estimated_cost:.4f}, "
            f"Duration={duration_ms}ms, Feature={feature_name}"
        )

    # Simulate an LLM call within an application feature
    start_time = time.time()
    # ... actual LLM call happens here ...
    end_time = time.time()

    record_llm_call(
        model_name="gpt-4-turbo",
        input_tokens=1500,
        output_tokens=3000,
        duration_ms=int((end_time - start_time) * 1000),
        feature_name="product_description_generator"
    )

This granular data allows businesses to identify which features or parts of their application are the primary cost sinks, enabling targeted optimisation efforts. For example, if the "product description generator" feature is using GPT-4 Turbo for basic summarisation, it indicates an opportunity to switch to GPT-3.5 Turbo.

Usage Policies and Quotas

To prevent unexpected cost spikes, establish clear usage policies and implement quotas.

- Define Budgets: Set monthly or quarterly budgets for LLM API usage for different projects or teams.

- Implement Quotas: Use API provider features (if available) or build custom middleware to enforce limits on API calls or token usage per user, per application, or per time period.

- Alerting: Configure alerts to notify relevant stakeholders when usage approaches predefined thresholds. This allows for proactive intervention before budgets are exceeded.

- Cost Centre Attribution: If possible, attribute LLM costs to specific departments or projects. This fosters accountability and encourages teams to optimise their usage. For a large enterprise, knowing that the "Marketing Department's content creation tool" is responsible for £X of LLM spend can drive internal optimisation initiatives.
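The budget, quota, and alerting ideas above can be combined in a small piece of middleware. The following is a minimal in-memory sketch: the class name, the 80% alert threshold, and the figures are assumptions, and a real deployment would persist spend in a shared store and reset it each billing period.

```python
class BudgetTracker:
    """Tracks spend against a budget and reports when thresholds are crossed."""

    def __init__(self, budget: float, alert_fraction: float = 0.8):
        self.budget = budget
        self.alert_fraction = alert_fraction
        self.spent = 0.0

    def can_spend(self, estimated_cost: float) -> bool:
        """Return True if the call would keep total spend within budget."""
        return self.spent + estimated_cost <= self.budget

    def record(self, cost: float) -> str:
        """Add a cost and return 'ok', 'alert', or 'exceeded'."""
        self.spent += cost
        if self.spent > self.budget:
            return "exceeded"
        if self.spent >= self.budget * self.alert_fraction:
            return "alert"
        return "ok"


# Hypothetical monthly budget of £100 for one team
marketing = BudgetTracker(budget=100.0)
status_after_first = marketing.record(50.0)
status_after_second = marketing.record(35.0)
allowed = marketing.can_spend(20.0)
print(status_after_first, status_after_second, allowed)
```

Checking can_spend before each call enforces the quota, while the status returned by record can trigger notifications to stakeholders.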

A/B Testing and Optimisation Loops

LLM optimisation is an iterative process. Continuously experiment with different prompts, models, and strategies, and measure their impact on both performance and cost.

- Prompt A/B Testing: Test different prompt variations for the same task. For example, compare a concise prompt against a more detailed one. Measure token usage, output quality (using human evaluation or automated metrics), and overall cost.

- Model A/B Testing: For a given task, compare the performance and cost of different LLMs (e.g., GPT-3.5 Turbo vs. Claude 3 Sonnet). This helps in making data-driven decisions about model selection.

- Performance Metrics: Define clear metrics for success beyond just cost. These might include:

  - Accuracy: How often the LLM provides correct or desired information.

  - Relevance: How well the output matches the prompt's intent.

  - Coherence/Fluency: The readability and naturalness of the generated text.

  - Latency: Response time.

- Feedback Loops: Establish mechanisms for gathering feedback from users or internal reviewers on the quality of LLM outputs. Use this feedback to refine prompts, fine-tune models, or adjust routing logic.
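When comparing variants, raw cost alone can mislead: a cheaper prompt or model that fails more often may cost more per usable result. One possible way to combine cost and quality is cost per acceptable output, sketched below. The class name and all figures are hypothetical, and "acceptable" stands for whatever quality bar your evaluation defines.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Aggregated results for one prompt or model variant in an A/B test."""
    name: str
    total_cost: float
    total_calls: int
    acceptable_outputs: int

    @property
    def acceptance_rate(self) -> float:
        return self.acceptable_outputs / self.total_calls if self.total_calls else 0.0

    @property
    def cost_per_acceptable(self) -> float:
        if self.acceptable_outputs == 0:
            return float("inf")  # no usable output at any price
        return self.total_cost / self.acceptable_outputs


# Hypothetical test results over 1,000 calls each
variant_a = VariantResult("detailed prompt", total_cost=2.00, total_calls=1000, acceptable_outputs=900)
variant_b = VariantResult("concise prompt", total_cost=1.20, total_calls=1000, acceptable_outputs=780)

best = min([variant_a, variant_b], key=lambda v: v.cost_per_acceptable)
print(f"Lowest cost per acceptable output: {best.name}")
```

A variant with a lower acceptance rate can still win on this metric, so it is worth setting a minimum acceptable quality level before choosing on cost.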

By integrating these monitoring and governance practices, businesses can maintain a dynamic and responsive approach to LLM cost management, ensuring that their AI investments remain financially sound and strategically aligned with business goals.

Key Takeaways

- Understand Token Costs: Recognise that LLM costs are primarily driven by input and output tokens, with output often being more expensive. Different models have vastly different token pricing.

- Prioritise Prompt Efficiency: Craft concise prompts, manage context carefully, and instruct for specific output formats to minimise token usage.

- Implement Caching: Cache common or repetitive LLM responses to avoid redundant API calls, significantly reducing costs and improving latency.

- Batch Requests: Leverage batching capabilities for multiple independent tasks to reduce per-request